Teachers and examiners (CBSESkillEduction) collaborated to create these Evaluation Class 10 Notes. All the important information is taken from the NCERT textbook Artificial Intelligence (417).

Evaluation Class 10 Notes

What is evaluation?

Evaluation is the process of understanding the reliability of an AI model by feeding a test dataset into the model and comparing its outputs with the actual answers. Different evaluation techniques can be used, depending on the type and purpose of the model.

The following terms are very important to the evaluation process.

Model Evaluation Terminologies

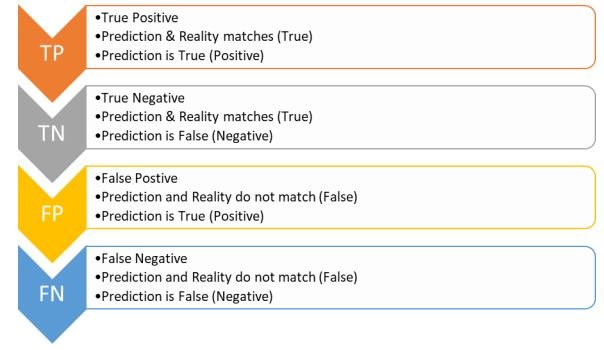

Several new terms come into the picture when we work on evaluating our model. Let's explore them using the example of a forest fire scenario.

Consider developing an AI-based prediction model and deploying it in a forest that is prone to forest fires. The model's goal is to predict whether or not a forest fire has broken out. To evaluate it, we need to consider two conditions: the prediction and the reality. The reality is the actual situation in the forest at the time of the prediction, while the prediction is the machine's output.

Here, we can see in the picture that a forest fire has broken out in the forest. The model predicts Yes, which means there is a forest fire. The prediction matches the reality. Hence, this condition is termed a True Positive.

Here, there is no fire in the forest, so the reality is No. In this case, the machine has also correctly predicted No. Therefore, this condition is termed a True Negative.

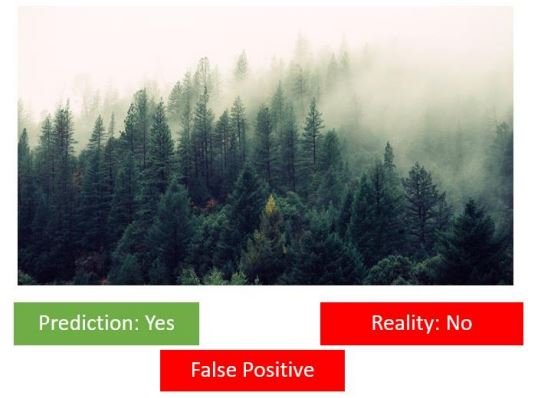

Here, the reality is that there is no forest fire, but the machine has incorrectly predicted that there is one. This case is termed a False Positive.

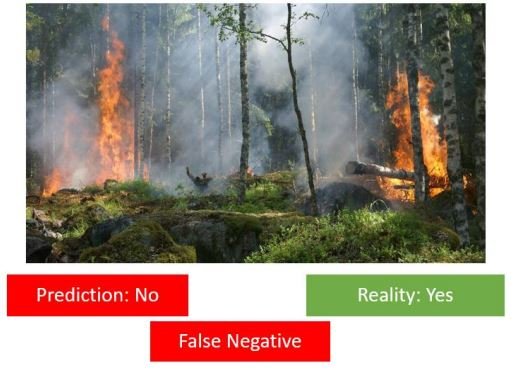

Here, a forest fire has broken out in the forest, so the reality is Yes, but the machine has incorrectly predicted No, which means it predicts that there is no forest fire. Therefore, this case is a False Negative.

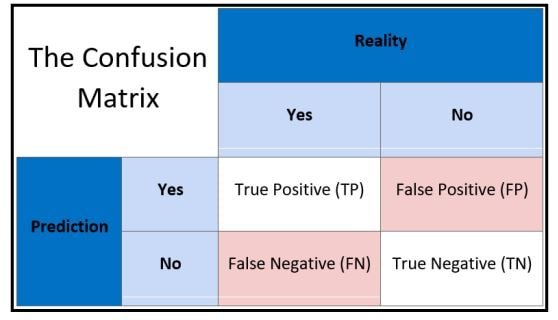
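The naming rule behind these four cases can be captured in a short Python sketch. The function `classify_outcome` is a hypothetical name chosen for illustration, and Yes/No is assumed to be represented as `True`/`False`. The first word of each term says whether the prediction matched the reality, and the second word repeats what the model predicted.

```python
def classify_outcome(reality: bool, prediction: bool) -> str:
    """Name the outcome of one forest fire prediction.

    reality: True if a fire has actually broken out (Yes).
    prediction: True if the model predicts a fire (Yes).
    """
    if prediction:
        # The model said Yes: correct only if there really is a fire
        return "True Positive" if reality else "False Positive"
    # The model said No: correct only if there really is no fire
    return "False Negative" if reality else "True Negative"


# The four forest fire cases described above, as (reality, prediction) pairs
for reality, prediction in [(True, True), (False, False), (False, True), (True, False)]:
    print(f"reality={reality}, prediction={prediction}: {classify_outcome(reality, prediction)}")
```

Running it prints True Positive, True Negative, False Positive and False Negative for the four pairs, in that order.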

Confusion matrix

The result of the comparison between prediction and reality can be recorded in what we call the confusion matrix. The confusion matrix allows us to understand the prediction results. Note that it is not an evaluation metric but a record which can help in evaluation. Let us once again take a look at the four conditions that we went through in the forest fire example:

| | Reality: Yes | Reality: No |
|---|---|---|
| Prediction: Yes | True Positive (TP) | False Positive (FP) |
| Prediction: No | False Negative (FN) | True Negative (TN) |

Prediction and Reality can be easily mapped together with the help of this confusion matrix.
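Filling in a confusion matrix amounts to counting how often each of the four conditions occurs. The sketch below assumes that the reality and the predictions are stored as two equally long lists of `True` (fire) / `False` (no fire) values; the function name `count_outcomes` and the six observations are hypothetical.

```python
from collections import Counter


def count_outcomes(reality: list[bool], predictions: list[bool]) -> dict[str, int]:
    """Count the four confusion matrix cells (TP, TN, FP, FN)."""
    if len(reality) != len(predictions):
        raise ValueError("reality and predictions must have the same length")
    counts = Counter()
    for actual, predicted in zip(reality, predictions):
        if predicted and actual:
            counts["TP"] += 1  # fire predicted, fire happened
        elif not predicted and not actual:
            counts["TN"] += 1  # no fire predicted, no fire happened
        elif predicted and not actual:
            counts["FP"] += 1  # fire predicted, but there was none
        else:
            counts["FN"] += 1  # fire happened, but it was not predicted
    # Report all four cells, including any that never occurred (count 0)
    return {key: counts[key] for key in ("TP", "TN", "FP", "FN")}


# Hypothetical data for six observations: True = fire (Yes), False = no fire (No)
actual_fire = [True, False, False, True, False, True]
predicted_fire = [True, False, True, False, False, True]
print(count_outcomes(actual_fire, predicted_fire))
```

For these six hypothetical observations it prints `{'TP': 2, 'TN': 2, 'FP': 1, 'FN': 1}`, which are exactly the four numbers that go into the cells of the matrix.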

Evaluation Methods

Accuracy, precision, and recall are the three primary measures used to assess the success of a classification algorithm.

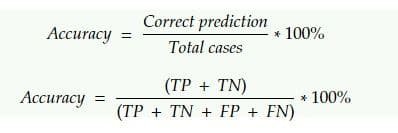

Accuracy

Accuracy is defined as the percentage of correct predictions out of all the observations. A prediction is correct when it matches the reality, so accuracy takes into account the True Positives and True Negatives:

$$\text{Accuracy} = \frac{\text{Correct predictions}}{\text{Total observations}} \times 100\% = \frac{TP + TN}{TP + TN + FP + FN} \times 100\%$$

Here, the total observations cover all the possible outcomes of a prediction: True Positive (TP), True Negative (TN), False Positive (FP) and False Negative (FN).

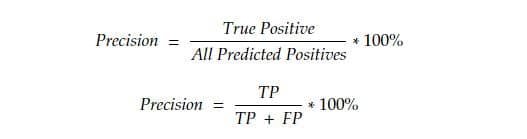
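The accuracy formula translates directly into code. This is a minimal sketch: the function returns a fraction between 0 and 1 (shown as a percentage when printed), and the counts are made-up numbers for 100 hypothetical forest observations.

```python
def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Fraction of all observations that the model predicted correctly (0 to 1)."""
    total = tp + tn + fp + fn
    if total == 0:
        raise ValueError("there are no observations to evaluate")
    # Correct predictions are the True Positives plus the True Negatives
    return (tp + tn) / total


# Hypothetical counts for 100 forest observations
print(f"Accuracy: {accuracy(tp=20, tn=55, fp=5, fn=20):.0%}")  # Accuracy: 75%
```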

Precision

Precision is defined as the percentage of True Positive cases out of all the cases where the prediction is positive (the model predicted Yes). That is, it takes into account the True Positives and False Positives:

$$\text{Precision} = \frac{TP}{TP + FP} \times 100\%$$

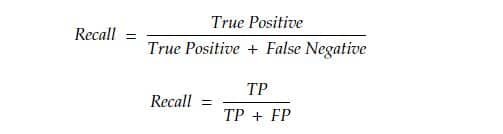
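A minimal Python sketch of the precision formula follows. It assumes the counts are already known, returns a fraction between 0 and 1, and uses hypothetical numbers; when the model never predicts Yes, precision is undefined, so the sketch raises an error in that case.

```python
def precision(tp: int, fp: int) -> float:
    """Fraction of the model's Yes predictions that were real fires (0 to 1)."""
    predicted_yes = tp + fp
    if predicted_yes == 0:
        raise ValueError("the model never predicted Yes, so precision is undefined")
    return tp / predicted_yes


# Hypothetical counts: the model said Yes 25 times and was right 20 times
print(f"Precision: {precision(tp=20, fp=5):.0%}")  # Precision: 80%
```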

Recall

Recall is defined as the percentage of actual positive cases that the model correctly detects as positive. It focuses on the scenarios where a fire actually existed in reality, whether the machine recognised it correctly or not. That is, it takes into account both True Positives (there was a forest fire in reality and the model predicted a forest fire) and False Negatives (there was a forest fire but the model didn't predict it):

$$\text{Recall} = \frac{TP}{TP + FN} \times 100\%$$
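Recall can be sketched the same way. This is an illustration under the same assumptions (known counts, a result between 0 and 1, hypothetical numbers); if there were no real fires at all, recall is undefined and the sketch raises an error.

```python
def recall(tp: int, fn: int) -> float:
    """Fraction of the real fires that the model detected (0 to 1)."""
    actual_yes = tp + fn
    if actual_yes == 0:
        raise ValueError("there were no real fires, so recall is undefined")
    return tp / actual_yes


# Hypothetical counts: 40 real fires, of which the model detected 20
print(f"Recall: {recall(tp=20, fn=20):.0%}")  # Recall: 50%
```

Note that False Positives do not appear anywhere in this function: a model can raise many false alarms and still have a high recall.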

Which Metric is Important?

Whether Precision or Recall matters more depends on the situation in which the model has been deployed. In a situation like a forest fire, a False Negative can cost us a lot of money and put us in danger. Imagine that no alarm is raised even though a forest fire has broken out: the entire forest might catch fire.

A viral outbreak is another situation in which a False Negative can be harmful. Consider a scenario in which a deadly virus has begun to spread but the model used to predict viral outbreaks does not detect it. The virus may infect many people and spread widely.

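Now consider a model that predicts whether an email is spam. If the model wrongly predicted that important emails were spam, people would not read them, which could lead to the loss of crucial information.

In this case, the cost of a False Positive (predicting that a message is spam when it is not) would be high. So, in the forest fire and viral outbreak cases, where False Negatives are costly, Recall is the more important metric, while in the spam case, where False Positives are costly, Precision matters more.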

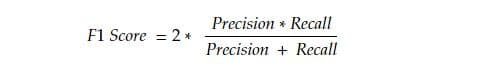

F1 Score

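The F1 score can be defined as a measure of the balance between Precision and Recall:

$$\text{F1 Score} = 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$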

Take a look at the formula and think about when we can get a perfect F1 score.

An ideal situation would be one where both Precision and Recall have a value of 1 (that is, 100%). In that case, the F1 score would also be an ideal 1 (100%), which is known as the perfect value for the F1 score. As the values of both Precision and Recall range from 0 to 1, the F1 score also ranges from 0 to 1.
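The F1 formula can be checked with a small Python sketch. Precision and Recall are assumed to be given as fractions between 0 and 1. When both are 0 the formula would divide by zero, so the sketch returns 0 in that case; this is a common convention assumed here, not something stated in the notes.

```python
def f1_score(precision: float, recall: float) -> float:
    """Balance between precision and recall, both given as fractions (0 to 1)."""
    if not (0 <= precision <= 1 and 0 <= recall <= 1):
        raise ValueError("precision and recall must lie between 0 and 1")
    if precision + recall == 0:
        # Assumed convention: both metrics are 0, so the F1 score is 0
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(f1_score(1.0, 1.0))            # 1.0, the perfect F1 score
print(f1_score(1.0, 0.0))            # 0.0, perfect precision cannot make up for zero recall
print(round(f1_score(0.8, 0.5), 2))  # 0.62, lower than the simple average of 0.65
```

The last line shows why the F1 score is a good measure of balance: it is pulled towards the smaller of the two values, so a model only scores well when both Precision and Recall are high.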

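Let us explore the variations we can have in the F1 score:

| Precision | Recall | F1 Score |
|---|---|---|
| Low | Low | Low |
| Low | High | Low |
| High | Low | Low |
| High | High | High |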
